AIPOCH vs FreedomAI: Which Disease Mechanism Agent Skill Performs Better?

A data-driven comparison of AIPOCH disease-mechanism-evidence-map and FreedomAI tooluniverse-multiomic-disease-characterization using MedSkillAudit.

Understanding disease mechanisms is one of the most important foundations of biomedical research. From identifying molecular pathways to linking cell types, tissues, and clinical phenotypes, mechanism analysis helps researchers move from observation to explanation.

As AI-powered medical research workflows become more common, agent skills are increasingly used to support disease mechanism analysis.

In this article, we compare two disease mechanism agent skills:

-

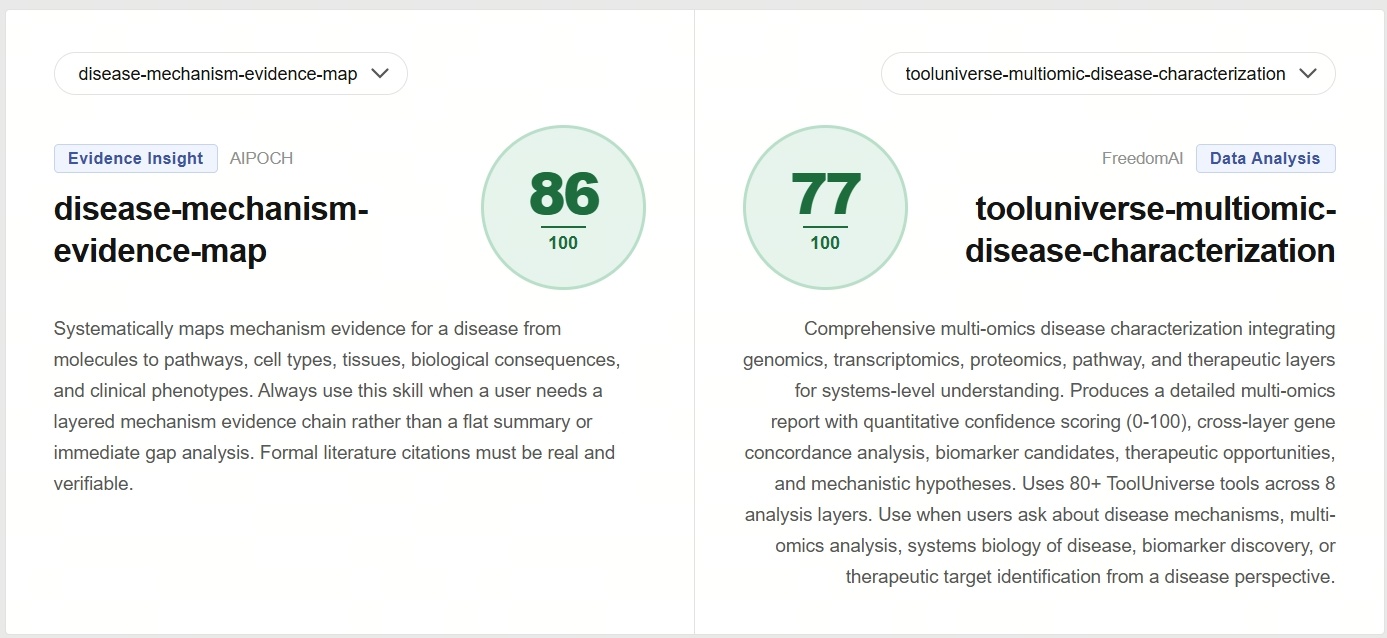

AIPOCH disease-mechanism-evidence-map

The Agent Skill Description: Systematically maps mechanism evidence for a disease from molecules to pathways, cell types, tissues, biological consequences, and clinical phenotypes. Always use this skill when a user needs a layered mechanism evidence chain rather than a flat summary or immediate gap analysis. Formal literature citations must be real and verifiable.

-

FreedomAI tooluniverse-multiomic-disease-characterization

The Agent Skill Description: Comprehensive multi-omics disease characterization integrating genomics, transcriptomics, proteomics, pathway, and therapeutic layers for systems-level understanding. Produces a detailed multi-omics report with quantitative confidence scoring, cross-layer gene concordance analysis, biomarker candidates, therapeutic opportunities, and mechanistic hypotheses.

How We Evaluated the Agent Skills

This comparison is not based on opinions or isolated examples.

We used AIPOCH MedSkillAudit, an evaluation framework designed to assess agent skills.

Both agent skills were tested under identical conditions, using the standardized settings defined by MedSkillAudit.

Core Capability Section Results Analysis

The Core Capability section of MedSkillAudit is a static quality evaluation of the agent skill itself. At this stage, MedSkillAudit evaluates how well the agent skill is designed.

It covers eight key dimensions:

- Functional Suitability

- Reliability

- Performance & Context

- Agent Usability

- Human Usability

- Security

- Maintainability

- Agent-Specific Capability

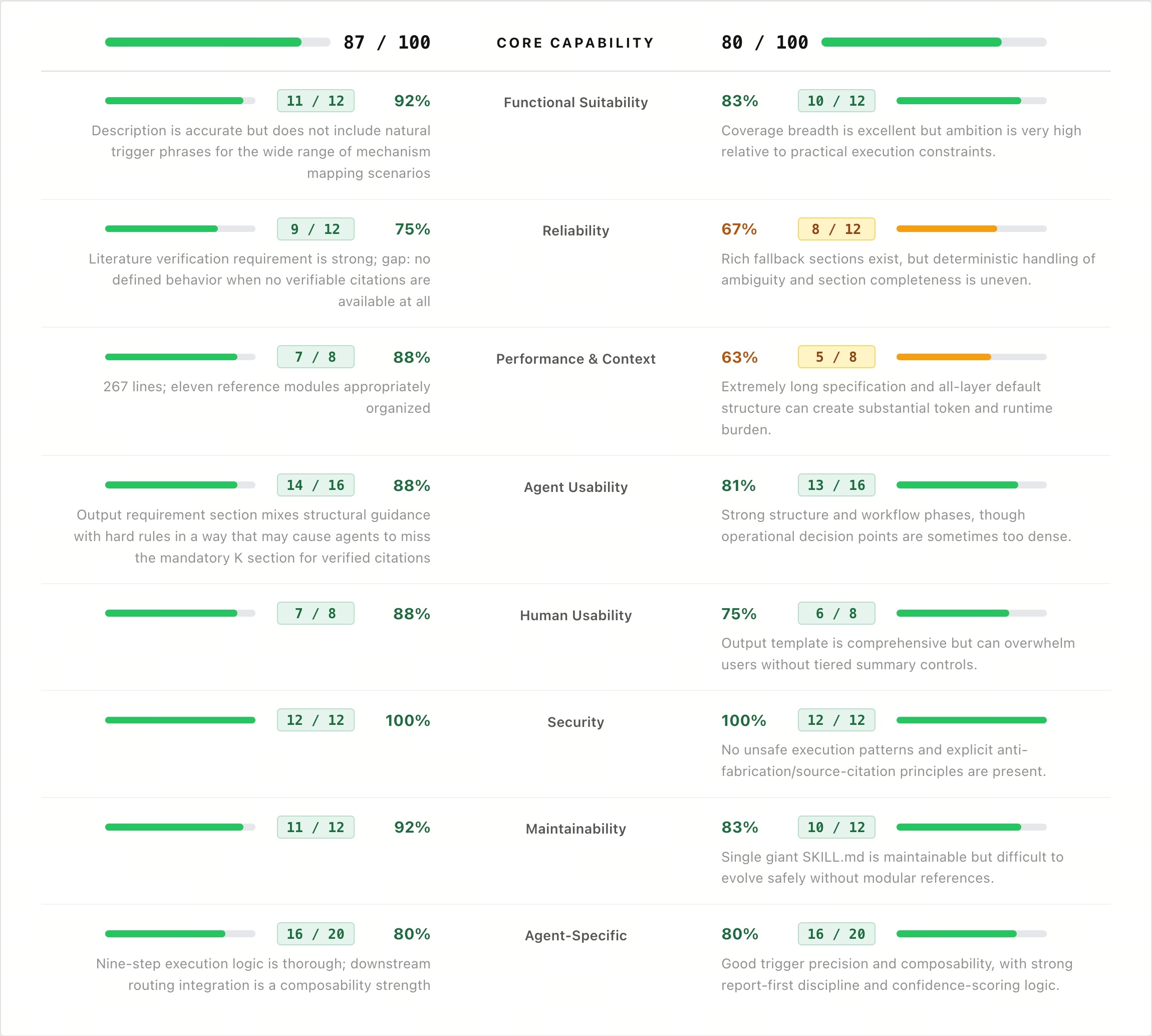

In this comparison, AIPOCH disease-mechanism-evidence-map achieved a Core Capability score of 87/100, while FreedomAI tooluniverse-multiomic-disease-characterization scored 80/100.

Although both skills show strong design quality, the scoring reveals clear differences in how each skill is structured.

AIPOCH scores higher in six of the eight dimensions (AIPOCH vs FreedomAI):

- Functional Suitability (92% vs 83%)

- Reliability (75% vs 67%)

- Performance & Context (88% vs 63%)

- Agent Usability (88% vs 81%)

- Human Usability (88% vs 75%)

- Maintainability (92% vs 83%)

Both skills perform equally well in:

- Security (100% vs 100%)

- Agent-Specific Capability (80% vs 80%)
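To see where the two skills diverge most, the per-dimension percentages listed above can be turned into point gaps and ranked. The following is a minimal sketch that uses only the numbers reported in this article; the `scores` dictionary and the `dimension_gaps` helper are illustrative names, not part of MedSkillAudit. It does not attempt to reproduce the overall Core Capability scores, because the article does not describe how the dimensions are weighted.

```python
# Per-dimension Core Capability percentages as reported in this article.
# Tuple order: (AIPOCH, FreedomAI).
scores = {
    "Functional Suitability": (92, 83),
    "Reliability": (75, 67),
    "Performance & Context": (88, 63),
    "Agent Usability": (88, 81),
    "Human Usability": (88, 75),
    "Security": (100, 100),
    "Maintainability": (92, 83),
    "Agent-Specific Capability": (80, 80),
}


def dimension_gaps(scores):
    """Return (dimension, AIPOCH minus FreedomAI) pairs, largest gap first."""
    gaps = [(name, aipoch - freedomai) for name, (aipoch, freedomai) in scores.items()]
    return sorted(gaps, key=lambda item: item[1], reverse=True)


gaps = dimension_gaps(scores)
for name, gap in gaps:
    # Positive values mean AIPOCH scored higher on that dimension.
    print(f"{name:<26} {gap:+d}")
```

Ranked this way, Performance & Context shows the widest gap (25 points), followed by Human Usability (13 points), while Security and Agent-Specific Capability show no gap.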

Reliability deserves a closer look: it is the lowest-scoring dimension for AIPOCH (75%) and among the two lowest for FreedomAI (67%). For AIPOCH disease-mechanism-evidence-map, the literature verification requirement is strong, but there is a gap: the skill defines no behavior for the case where no verifiable citations are available at all. For FreedomAI tooluniverse-multiomic-disease-characterization, rich fallback sections exist, but deterministic handling of ambiguity and section completeness is uneven.

Medical Task Section Results Analysis

The Medical Task section of MedSkillAudit is a dynamic evaluation: it measures how an agent skill performs during actual task execution.

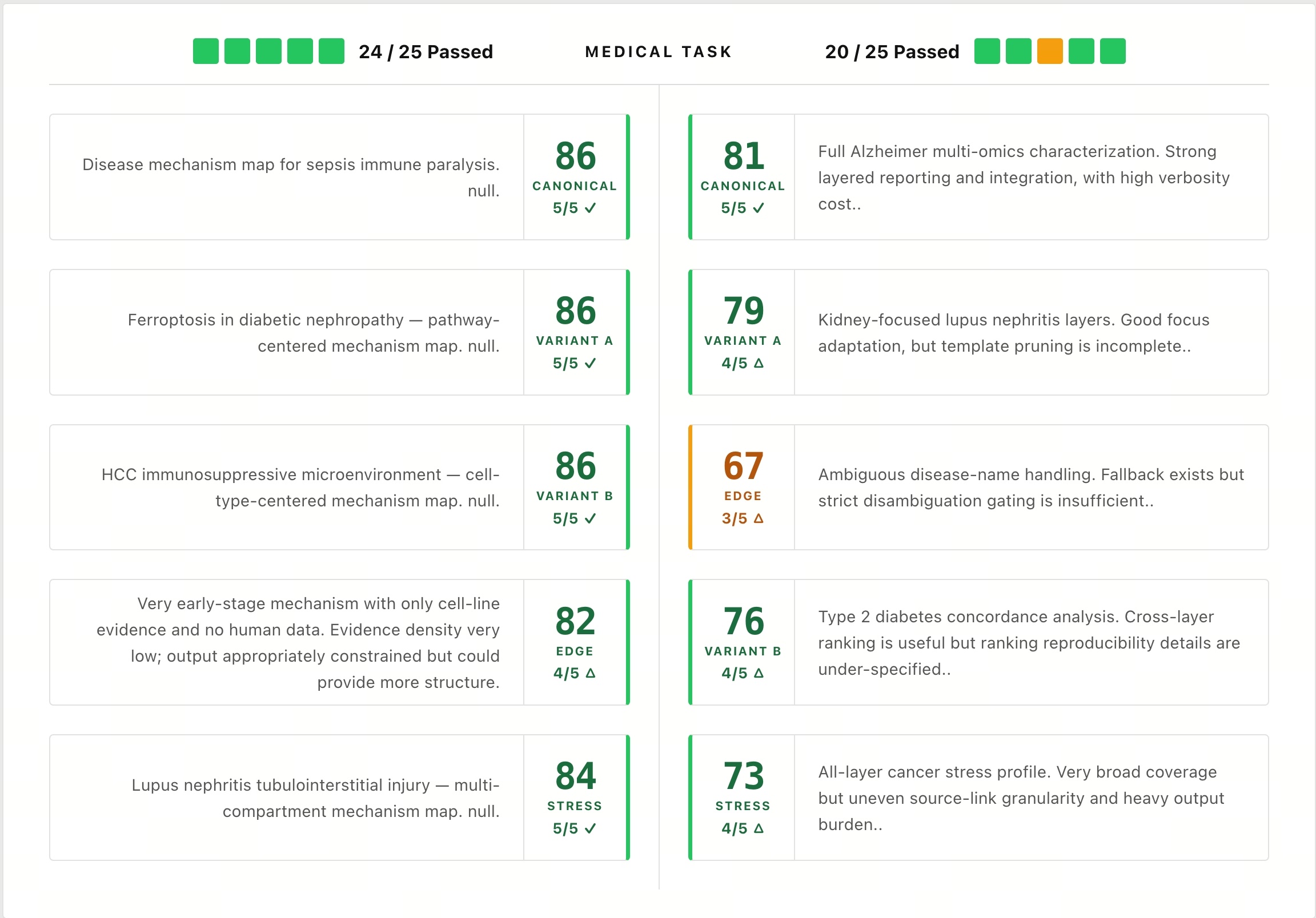

In this benchmark:

- AIPOCH disease-mechanism-evidence-map passed 24/25

- FreedomAI tooluniverse-multiomic-disease-characterization passed 20/25
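Expressed as pass rates, the counts above are easier to compare at a glance. This is a small sketch based only on the counts reported here; `results` and `pass_rate` are hypothetical names chosen for illustration.

```python
# Pass counts as reported in this article: (passed, total).
results = {
    "AIPOCH disease-mechanism-evidence-map": (24, 25),
    "FreedomAI tooluniverse-multiomic-disease-characterization": (20, 25),
}


def pass_rate(passed, total):
    """Return the pass rate as a percentage."""
    return 100 * passed / total


rates = {name: pass_rate(passed, total) for name, (passed, total) in results.items()}
for name, rate in rates.items():
    print(f"{name}: {rate:.0f}%")
```

That works out to 96% for AIPOCH and 80% for FreedomAI, a difference of four passed items out of 25.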

Final Score Comparison

- AIPOCH disease-mechanism-evidence-map scored 86/100

- FreedomAI tooluniverse-multiomic-disease-characterization scored 77/100

This 9-point difference suggests that AIPOCH demonstrates stronger overall agent skill quality across both design architecture and execution performance.

In other words, AIPOCH is not only better structured at the skill level, but also performs more consistently when applied to real disease mechanism research tasks.

It is important to note that a higher score does not mean one skill should replace the other. The most suitable choice depends on specific research goals, task requirements, workflow preferences, and other practical considerations.

Use Case: Disease Mechanism Evidence Map

The AIPOCH disease-mechanism-evidence-map skill is designed to systematically map mechanism evidence for a disease from molecules to pathways, cell types, tissues, biological consequences, and clinical phenotypes.

Primary Use Cases:

- Rapid understanding of disease mechanism architecture.

- Mechanism hypothesis building before study design.

- Disease introduction / discussion framework construction.

- Mechanism-oriented evidence synthesis before gap analysis.

- Mechanism-chain inspection for translational thinking.

If you would like to explore more details of this skill, you can visit the AIPOCH Disease Mechanism Evidence Map Skill Page.

Explore More AIPOCH Medical Research Agent Skills

You can explore more medical research skills in the AIPOCH Agent Skills Collection or access implementation details through the AIPOCH GitHub Repository.

Disclaimer

This AI-assisted article is provided for informational and research purposes only and does not constitute medical advice, clinical guidance, diagnostic recommendations, treatment decisions, publication acceptance recommendations, or formal scientific peer review decisions.

The comparisons and analysis presented in this article are based on standardized evaluation results from MedSkillAudit and are intended as structured references for understanding agent skill quality. They should not replace independent judgment from qualified researchers, reviewers, editors, clinicians, or healthcare professionals.

As this article includes AI-assisted interpretation and summary, there may be limitations in completeness, contextual judgment, and scenario-specific applicability. Readers should independently verify all biomedical, methodological, academic, and clinical conclusions before making research, publication, or medical decisions. Any reliance on this content is at the reader’s own discretion and risk.