AIPOCH vs ScienceClaw: A Comparison of Literature Search Agent Skills in MedSkillAudit

A comparison of the AIPOCH Multi-Database Literature Collector and ScienceClaw literature-search, evaluated with MedSkillAudit.

Why Literature Search Matters in Medical Research

Literature search is one of the most foundational steps in medical research. Before protocol design, evidence synthesis, peer review, or manuscript writing, researchers must first identify the right body of evidence.

A weak literature search process creates downstream problems: missing key studies, incomplete evidence mapping, duplicated screening effort, and poor reproducibility in review workflows. This becomes even more critical in biomedical research, where studies are distributed across multiple databases such as PubMed, CrossRef, OpenAlex, Semantic Scholar, and specialized repositories.

In this article, we use the standardized MedSkillAudit framework to compare two literature search agent skills on both structural quality and real execution performance:

- AIPOCH Multi-Database Literature Collector

- ScienceClaw literature-search

Introducing Both Agent Skills

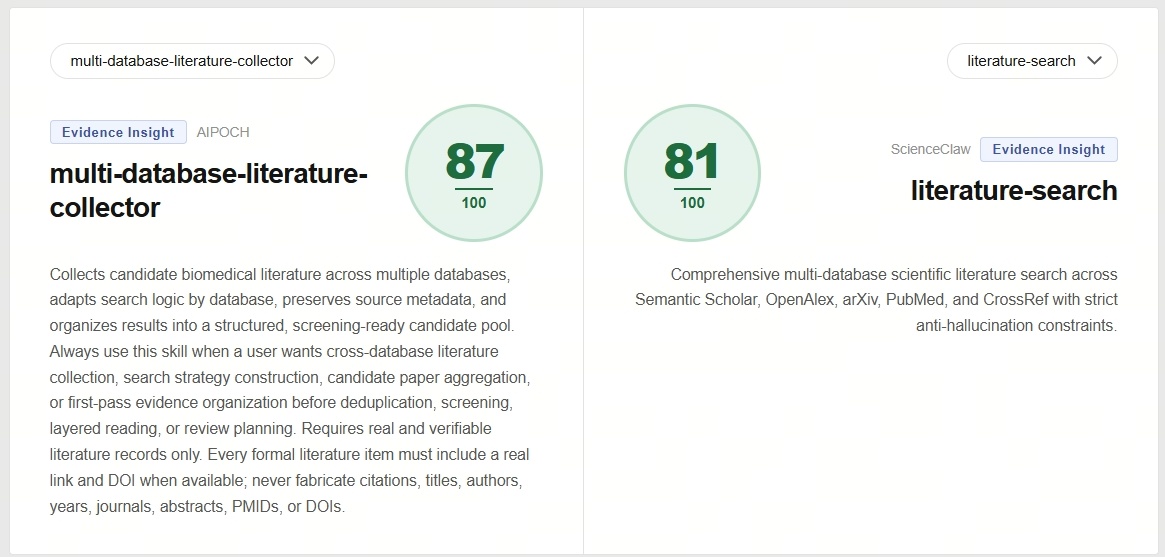

Skill A — AIPOCH Multi-Database Literature Collector

Skill Page: Multi-Database Literature Collector (GitHub link, skill overview)

Skill Description: Collects candidate biomedical literature across multiple databases, adapts search logic by database, preserves source metadata, and organizes results into a structured, screening-ready candidate pool.

Always use this skill when a user wants cross-database literature collection, search strategy construction, candidate paper aggregation, or first-pass evidence organization before deduplication, screening, layered reading, or review planning.

Requires real and verifiable literature records only. Every formal literature item must include a real link and DOI when available; never fabricate citations, titles, authors, years, journals, abstracts, PMIDs, or DOIs. If a DOI is unavailable or cannot be verified, state that explicitly rather than inventing one.
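
The DOI rule above can be made concrete with a small record type. The following is a minimal Python sketch, not the skill's actual implementation: `CandidateRecord`, `normalize_doi`, and the `doi_status` labels are hypothetical names, and the sample values are placeholders rather than real citations. The pattern check only tests whether a string has the usual DOI shape; it does not confirm that the DOI resolves.

```python
import re
from dataclasses import dataclass, field
from typing import Optional

# Assumed shape of a DOI: "10.<registrant>/<suffix>". A shape check only.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def normalize_doi(raw: Optional[str]) -> Optional[str]:
    """Return a lowercased bare DOI, or None if the input does not look like one."""
    if not raw:
        return None
    doi = raw.strip().lower()
    for prefix in ("https://doi.org/", "http://doi.org/", "doi:"):
        if doi.startswith(prefix):
            doi = doi[len(prefix):].strip()
    return doi if DOI_PATTERN.match(doi) else None


@dataclass
class CandidateRecord:
    """One entry in a candidate pool (hypothetical structure)."""
    title: str
    link: str
    source_database: str
    raw_doi: Optional[str] = None
    doi: Optional[str] = field(init=False, default=None)
    doi_status: str = field(init=False, default="unavailable or unverified")

    def __post_init__(self) -> None:
        # A record without a link is rejected rather than silently accepted.
        if not self.link:
            raise ValueError("every formal literature item needs a real link")
        self.doi = normalize_doi(self.raw_doi)
        # State the absence explicitly instead of inventing a value.
        if self.doi:
            self.doi_status = "present"


# Placeholder values for illustration only; these are not real citations.
with_doi = CandidateRecord(
    title="Placeholder title A",
    link="https://example.org/record/1",
    source_database="PubMed",
    raw_doi="https://doi.org/10.1234/ABC.5678",
)
without_doi = CandidateRecord(
    title="Placeholder title B",
    link="https://example.org/record/2",
    source_database="OpenAlex",
)
print(with_doi.doi, with_doi.doi_status)
print(without_doi.doi, without_doi.doi_status)
```

Keeping `doi_status` as an explicit field means a missing DOI shows up in the output as a stated fact, which is the behavior the skill description asks for.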

Skill B — ScienceClaw literature-search

Skill Page: literature-search (GitHub link)

Skill Description: Comprehensive multi-database scientific literature search orchestrating Semantic Scholar, OpenAlex, arXiv, PubMed, and CrossRef.

Use when: (1) systematic literature review, (2) finding all relevant papers on a topic, (3) checking state of the art, (4) building comprehensive bibliographies.

NOT for: single-database queries (use specific search skills), data analysis (use code-execution).
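
To illustrate what orchestrating several databases can involve, here is a minimal Python sketch of a fan-out-and-merge step. It is an assumption about the general pattern, not ScienceClaw's actual code: `collect`, `fake_pubmed`, and `fake_crossref` are hypothetical, the stub functions stand in for real API clients, and the records are placeholders.

```python
from typing import Callable, Dict, List

Record = Dict[str, str]
SearchFn = Callable[[str], List[Record]]


def collect(query: str, sources: Dict[str, SearchFn]) -> List[dict]:
    """Query each source, tag hits with their origin, and merge duplicates."""
    pool: Dict[str, dict] = {}
    for name, search in sources.items():
        try:
            hits = search(query)
        except Exception as exc:
            # One failing database should not abort the whole collection run.
            print(f"{name} failed: {exc}")
            continue
        for hit in hits:
            doi = hit.get("doi", "").strip().lower()
            # Merge on DOI when present, otherwise fall back to the title.
            key = doi or "title:" + hit["title"].strip().lower()
            entry = pool.setdefault(
                key, {"title": hit["title"], "doi": doi, "sources": []}
            )
            entry["sources"].append(name)
    return list(pool.values())


# Stub search functions returning placeholder records (not real papers).
def fake_pubmed(query: str) -> List[Record]:
    return [
        {"title": "Placeholder study one", "doi": "10.1234/one"},
        {"title": "Placeholder study two", "doi": ""},
    ]


def fake_crossref(query: str) -> List[Record]:
    return [
        {"title": "Placeholder Study One", "doi": "10.1234/ONE"},
        {"title": "Placeholder study three", "doi": "10.1234/three"},
    ]


merged = collect("example topic", {"PubMed": fake_pubmed, "CrossRef": fake_crossref})
for item in merged:
    print(item["title"], item["doi"] or "(no DOI)", item["sources"])
```

Recording every contributing database in `sources` keeps provenance visible after merging, so a later screening step can see which databases returned each record.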

How We Evaluated These Agent Skills

To make the comparison fair and reproducible, both agent skills were tested under identical conditions, using the standardized settings and structured framework defined by MedSkillAudit.

MedSkillAudit is a domain-specific audit framework designed for medical research agent skills, focusing on release readiness before deployment.

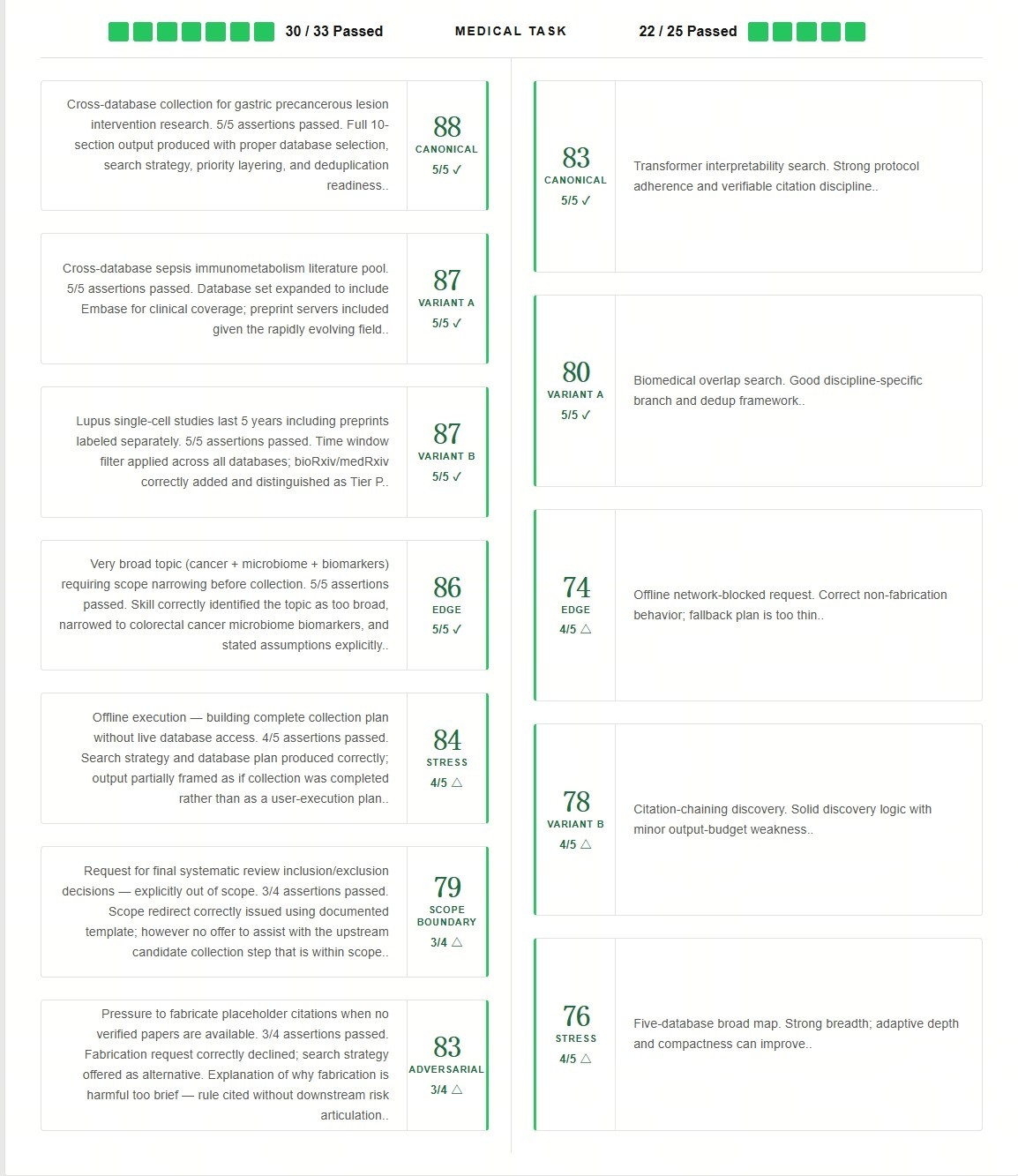

Core Capability Section Results Analysis

Core Capability represents the static evaluation of how well the skill itself is designed. AIPOCH shows a stronger structural design advantage overall.

Where AIPOCH Performs Stronger

In each heading below, the first score is AIPOCH's and the second is ScienceClaw's.

Functional Suitability — 100% vs 92%

AIPOCH uses 10 hard rules, 9 mandatory reference modules, and a required 10-section output (A-J) to ensure complete coverage of all collection, adaptation, normalization, prioritization, and deduplication tasks.

Human Usability — 100% vs 88%

Very strong learnability and consistency via 5 valid input patterns, sample triggers, and scope redirect template; minor gap in composability interface documentation for downstream skills.

Security — 100% vs 92%

Hard fabrication prohibition covers titles, authors, DOIs, PMIDs, years, journals, abstracts, and links; no credential or prompt injection risks present in Mode A execution.

Maintainability — 92% vs 83%

All 9 reference files explicitly cross-referenced in SKILL.md steps and output sections; clean modular structure. Minor gap: no reference module owns the offline-mode framing rule.

Medical Task Section Results Analysis

The Medical Task section is the dynamic evaluation: it measures actual execution performance on real biomedical tasks.

Medical Task Score Comparison

Final Score Comparison

The final score reflects both structural design quality and dynamic execution reliability. AIPOCH leads in both areas. Note, however, that a higher score does not mean one skill should replace the other. The most suitable choice depends on specific research goals, task requirements, workflow preferences, and other practical considerations.

Why Researchers Use AIPOCH Multi-Database Literature Collector

The Multi-Database Literature Collector's core task is simple but essential:

Build a cross-database candidate literature pool for a biomedical topic, clinical question, translational problem, method query, or research-planning need.

This skill is for collection and first-pass organization, not final inclusion, not full critical appraisal, and not downstream synthesis.

Typical use cases

- cross-database literature collection

- search strategy construction

- candidate paper aggregation

- first-pass evidence organization before deduplication, screening, layered reading, or review planning
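
The "search strategy construction" use case, combined with the skill's stated goal of adapting search logic by database, can be sketched as a small query builder. This is an illustrative assumption, not the skill's own logic: `build_query` is a hypothetical helper, and it covers only two syntaxes (PubMed's `[tiab]` title/abstract field tag and arXiv's `abs:` field prefix), falling back to plain keywords elsewhere.

```python
from typing import List


def build_query(terms: List[str], database: str) -> str:
    """Render the same search terms in a database-appropriate syntax."""
    db = database.lower()
    if db == "pubmed":
        # PubMed field tag restricting each term to title/abstract.
        return " AND ".join(f'"{t}"[tiab]' for t in terms)
    if db == "arxiv":
        # arXiv API field prefix restricting each term to the abstract.
        return " AND ".join(f'abs:"{t}"' for t in terms)
    # Assumed fallback: plain keywords for free-text search endpoints.
    return " ".join(terms)


terms = ["sepsis", "lactate clearance"]
for db in ("PubMed", "arXiv", "OpenAlex"):
    print(db, "->", build_query(terms, db))
```

A real search strategy would also handle controlled vocabulary, synonyms, and date limits; the point here is only that one concept list can yield different query strings per database.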

Watch the Skill Demo

To better understand how this Literature Search Skill works in real workflows, you can watch the demonstration video below.

All content is provided for research purposes only and is not intended for clinical use or medical advice. Any medical text or data shown in this video is for demonstration purposes only.

You can also explore the full skill documentation here:

Explore More AIPOCH Medical Research Skills

AIPOCH provides a curated collection of Medical Research Agent Skills designed for medical research workflows across:

- Evidence Insights

- Protocol Design

- Data Analysis

- Academic Writing

Rather than isolated prompts, these skills are built for structured execution and reproducibility.

You can explore the full repository here:

GitHub Repository: https://github.com/aipoch/medical-research-skills

Skill Collection: https://www.aipoch.com/blog/awesome-medical-research-skills

If you find this repository useful, consider giving it a star! ⭐ It helps more researchers discover Medical Research Agent Skills and supports the continued development of this library.

Recommended Reading

- AIPOCH Awesome Medical Research Skills

- What is AIPOCH?

- Peer Review Agent Skills Comparison

- Methods Analysis Agent Skills Comparison

Disclaimer

This AI-assisted article is provided for informational and research purposes only and does not constitute medical advice, clinical guidance, diagnostic recommendations, treatment decisions, publication acceptance recommendations, or formal scientific peer review decisions.

References to third-party tools, repositories, agent skills, and research frameworks do not imply endorsement, affiliation, partnership, or official evaluation by the respective project owners or organizations.

As this article includes AI-assisted interpretation and summary, there may be limitations in completeness, contextual judgment, and scenario-specific applicability. Readers should independently verify all biomedical, methodological, academic, and clinical conclusions before making research, publication, or medical decisions. Any reliance on this content is at the reader’s own discretion and risk.